Programming and Computing - ShellScript - Linux

1. Ubuntu 21.10 "Impish Indri": Tested with ZFS, Snapshots & Backups!

2. Encrypted ZFS Ubuntu Installation

3. Comparison of file systems - ZFS Wiki

4. Building ZFS on Raspberry Pi 3 running Raspbian

5. ZFS Without Tears

6. Configuring ZFS Cache for High Speed IO

https://linuxhint.com/configuring-zfs-cache/

7. ZFS / RAIDZ Capacity Calculator (beta)

8. Things Nobody Told You About ZFS

9. The Case For Using ZFS Compression

10. ZFS administration tool for Webmin

https://github.com/jonmatifa/zfsmanager

ZFS_administration_tool_for_Webmin.pdf

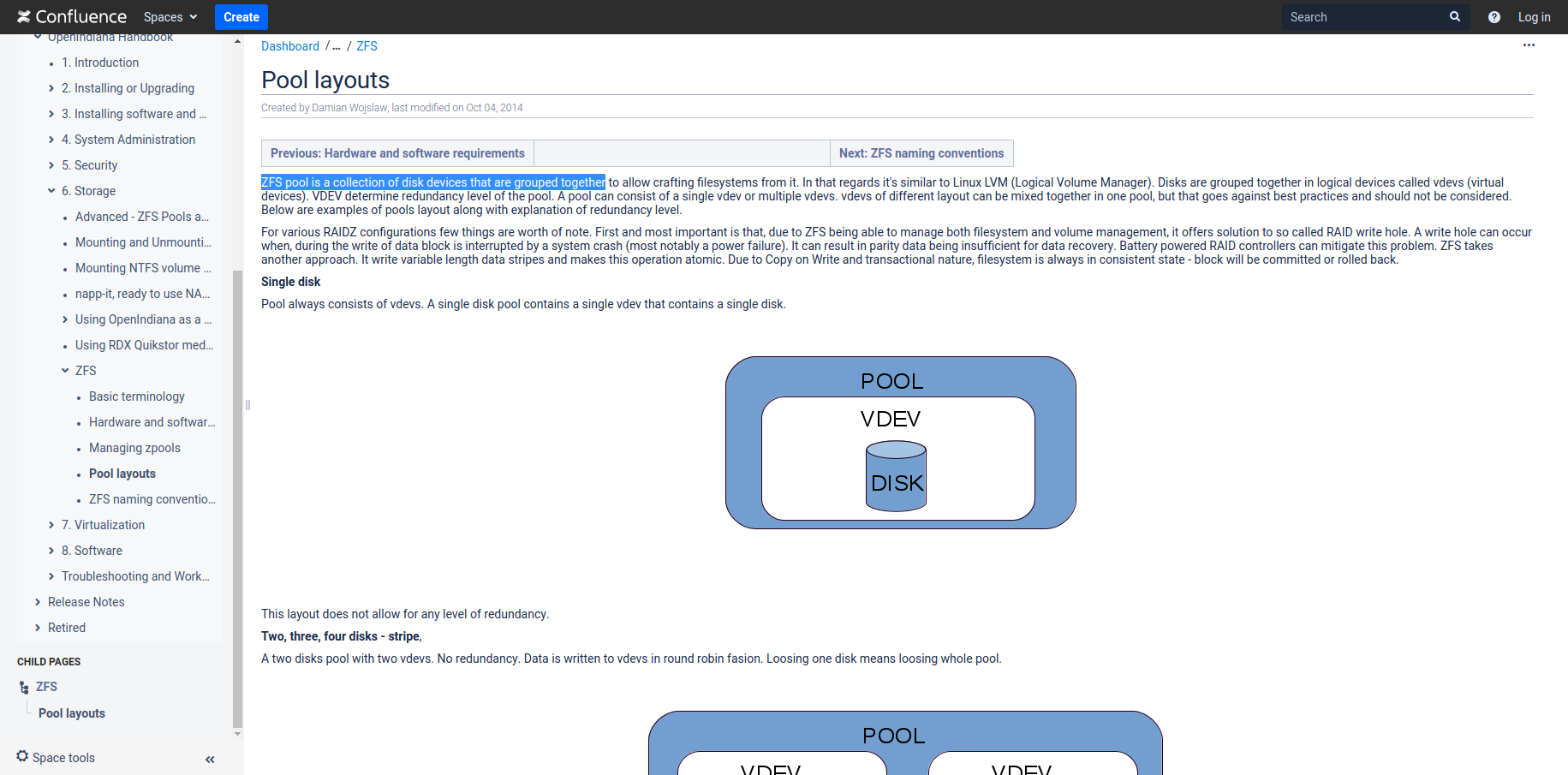

11. ZFS pool is a collection of disk devices that are grouped together

12. Interesting things you can do with ZFS

https://www.youtube.com/watch?v=6Bo4vYgmVhk

Interesting things you didn't know you could do with ZFS

13. OpenZFS channel

14. ZFS Wiki

15. ZFS quick command reference with examples

16. Managing Devices in ZFS Storage Pools

17. Zfs playlist

18. ZFS Datasets disappear on reboot

I have installed ZFS (0.6.5) on CentOS 7 and created a zpool. Everything works fine, except that my datasets disappear on reboot. I have been trying to debug this with the help of various online resources and blogs, but without success.

After a reboot, zfs list reports "no datasets available" and zpool list reports "no pools available". After a lot of online research, I got it working by manually importing from the cache file with zpool import -c cachefile, but I still had to run zpool set cachefile=/etc/zfs/zpool.cache Pool before the reboot so that I could import the pool afterwards.
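The manual workaround described above can be sketched as two small shell functions. This is only an illustration under stated assumptions: the function names, the `DRY_RUN` switch, and the use of `-a` (import every pool found in the cache file) are not from the question. By default the commands are printed rather than executed.

```shell
#!/bin/sh
# Sketch of the manual cache-file workaround from the question.
# Dry-run by default: commands are printed, not executed, unless DRY_RUN=0.
# CACHEFILE defaults to the path used in the question.
CACHEFILE="${CACHEFILE:-/etc/zfs/zpool.cache}"

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Before the reboot: record the pool in the cache file.
save_pool_to_cache() {
    run zpool set "cachefile=$CACHEFILE" "$1"
}

# After the reboot: import every pool listed in the cache file.
# The -a flag is an assumption; the question only mentions "-c cachefile".
import_pools_from_cache() {
    run zpool import -c "$CACHEFILE" -a
}
```

For example, `save_pool_to_cache zfsPool` before rebooting and `import_pools_from_cache` afterwards; set `DRY_RUN=0` and run as root to execute the commands for real.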

This is what systemctl status zfs-import-cache looks like,

zfs-import-cache.service - Import ZFS pools by cache file

Loaded: loaded (/usr/lib/systemd/system/zfs-import-cache.service; static)

Active: inactive (dead)

cat /etc/sysconfig/zfs

# ZoL userland configuration.

# Run `zfs mount -a` during system start?

ZFS_MOUNT='yes'

# Run `zfs unmount -a` during system stop?

ZFS_UNMOUNT='yes'

# Run `zfs share -a` during system start?

# nb: The shareiscsi, sharenfs, and sharesmb dataset properties.

ZFS_SHARE='yes'

# Run `zfs unshare -a` during system stop?

ZFS_UNSHARE='yes'

# Specify specific path(s) to look for device nodes and/or links for the

# pool import(s). See zpool(8) for more information about this variable.

# It supersedes the old USE_DISK_BY_ID which indicated that it would only

# try '/dev/disk/by-id'.

# The old variable will still work in the code, but is deprecated.

#ZPOOL_IMPORT_PATH="/dev/disk/by-vdev:/dev/disk/by-id"

# Should the datasets be mounted verbosely?

# A mount counter will be used when mounting if set to 'yes'.

VERBOSE_MOUNT='no'

# Should we allow overlay mounts?

# This is standard in Linux, but not ZFS which comes from Solaris where this

# is not allowed).

DO_OVERLAY_MOUNTS='no'

# Any additional option to the 'zfs mount' command line?

# Include '-o' for each option wanted.

MOUNT_EXTRA_OPTIONS=""

# Build kernel modules with the --enable-debug switch?

# Only applicable for Debian GNU/Linux {dkms,initramfs}.

ZFS_DKMS_ENABLE_DEBUG='no'

# Build kernel modules with the --enable-debug-dmu-tx switch?

# Only applicable for Debian GNU/Linux {dkms,initramfs}.

ZFS_DKMS_ENABLE_DEBUG_DMU_TX='no'

# Keep debugging symbols in kernel modules?

# Only applicable for Debian GNU/Linux {dkms,initramfs}.

ZFS_DKMS_DISABLE_STRIP='no'

# Wait for this many seconds in the initrd pre_mountroot?

# This delays startup and should be '0' on most systems.

# Only applicable for Debian GNU/Linux {dkms,initramfs}.

ZFS_INITRD_PRE_MOUNTROOT_SLEEP='0'

# Wait for this many seconds in the initrd mountroot?

# This delays startup and should be '0' on most systems. This might help on

# systems which have their ZFS root on a USB disk that takes just a little

# longer to be available

# Only applicable for Debian GNU/Linux {dkms,initramfs}.

ZFS_INITRD_POST_MODPROBE_SLEEP='0'

# List of additional datasets to mount after the root dataset is mounted?

#

# The init script will use the mountpoint specified in the 'mountpoint'

# property value in the dataset to determine where it should be mounted.

#

# This is a space separated list, and will be mounted in the order specified,

# so if one filesystem depends on a previous mountpoint, make sure to put

# them in the right order.

#

# It is not necessary to add filesystems below the root fs here. It is

# taken care of by the initrd script automatically. These are only for

# additional filesystems needed. Such as /opt, /usr/local which is not

# located under the root fs.

# Example: If root FS is 'rpool/ROOT/rootfs', this would make sense.

#ZFS_INITRD_ADDITIONAL_DATASETS="rpool/ROOT/usr rpool/ROOT/var"

# List of pools that should NOT be imported at boot?

# This is a space separated list.

#ZFS_POOL_EXCEPTIONS="test2"

# Optional arguments for the ZFS Event Daemon (ZED).

# See zed(8) for more information on available options.

#ZED_ARGS="-M"
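Most of the file quoted above is comments, so the settings actually in effect are easy to miss. A minimal sketch for listing only the active lines follows; the helper name `active_settings` is hypothetical.

```shell
# Print only the active (non-comment, non-blank) lines of a config file.
active_settings() {
    grep -Ev '^[[:space:]]*(#|$)' "$1"
}
```

Usage: `active_settings /etc/sysconfig/zfs`.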

I am not sure if this is a known issue. If it is, is there a workaround? Ideally I would like an easy way to preserve my datasets across reboots, preferably without the overhead of a cache file.

linux zfs centos7 zfsonlinux

asked Oct 28 '15 at 10:16 by Vincent, edited Oct 28 '15 at 11:09

What do zpool status -v and zpool import say? – ostendali Oct 28 '15 at 10:28

Hi, zpool status -v gives "no pools available", and zpool import gives me this: pool: zfsPool; id: 10064980395446559551; state: ONLINE; action: The pool can be imported using its name or numeric identifier; config: zfsPool ONLINE, sda4 ONLINE – Vincent Oct 28 '15 at 10:39

zpool import is how I got it working, after first setting the cachefile with the zpool set cachefile command – Vincent Oct 28 '15 at 10:40

You missed /etc/init/zpool-import.conf; can you post the contents of that file as well? – ostendali Oct 28 '15 at 11:19

Is the ZFS target enabled? systemctl status zfs.target – Michael Hampton♦

Oct 28 '15 at 12:44

3 Answers

Accepted answer, 5 votes

Please make sure the zfs service (target) is enabled. That's what handles pool

import/export on boot/shutdown.

zfs.target loaded active active ZFS startup target

You should never have to struggle with this. If you have a chance, update your ZFS packages, as the startup services have improved over the last few releases:

[root@zfs2 ~]# rpm -qi zfs

Name : zfs

Version : 0.6.5.2

Release : 1.el7.centos

answered Oct 28 '15 at 12:50 by ewwhite

Hi, I also tested 0.6.5.3, which I believe is the latest release, but still hit this issue. With 0.6.5.3 I even had to run modprobe zfs after every reboot to load the modules. By the way, the target is not enabled; please check the output in the comments above (reply to Michael). How do I enable it? Thanks. – Vincent Oct 28 '15 at 13:02

All you need to do is probably something like: systemctl enable zfs.target – ewwhite

Oct 28 '15 at 13:19
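The check-then-enable step suggested in this thread can be sketched as a small helper. The function name `ensure_enabled` is hypothetical; it relies only on `systemctl is-enabled` and `systemctl enable`, and enabling a unit requires root.

```shell
# Enable a systemd unit only if it is not enabled already.
ensure_enabled() {
    if systemctl is-enabled --quiet "$1"; then
        echo "$1 is already enabled"
    else
        echo "enabling $1"
        systemctl enable "$1"
    fi
}
```

Usage: `ensure_enabled zfs.target`, then reboot and check `zpool list`.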

Answer, 3 votes

I also had the problem of the ZFS pool disappearing after a reboot, running CentOS 7.3 and ZFS 0.6.5.9. Reimporting (zpool import zfspool) brought it back, but only until the next reboot.

Here's the command that worked for me (to make it persist through reboots):

systemctl preset zfs-import-cache zfs-import-scan zfs-mount zfs-share zfs-zed zfs.target

(Found this at: https://github.com/zfsonlinux/zfs/wiki/RHEL-%26-CentOS )
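After running the preset command, it may help to confirm what state each unit ended up in. The following is a sketch, not part of the answer: the function name `report_zfs_units` is hypothetical, and the unit list is copied from the command above.

```shell
# Units named in the preset command above.
ZFS_UNITS="zfs-import-cache zfs-import-scan zfs-mount zfs-share zfs-zed zfs.target"

# Print one line per unit with the state reported by systemctl is-enabled.
report_zfs_units() {
    for unit in $ZFS_UNITS; do
        state=$(systemctl is-enabled "$unit" 2>/dev/null)
        printf '%-18s %s\n' "$unit" "${state:-unknown}"
    done
}
```

Usage: call `report_zfs_units` after the preset and again after a reboot.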

answered Apr 5 '17 at 22:28 by Jeff, edited Apr 5 '17 at 23:50 by chicks

Answer, 1 vote

OK, so the pool is there, which means the problem is with your zpool.cache: it is not persistent, and that is why the pool loses its configuration when you reboot. I suggest running:

zpool import zfsPool

zpool list

Then check whether the pool is available. Reboot the server and see if it comes back; if it doesn't, perform the same steps and then run:

zpool scrub zfsPool

Just to make sure everything is all right with your pool.

Please also post the contents of:

/etc/default/zfs.conf

/etc/init/zpool-import.conf

Alternatively, if you are looking for a workaround, change the value from 1 to 0 in:

/etc/init/zpool-import.conf

and add the following to your /etc/rc.local:

zfs mount -a

That will do the trick.
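A slightly more defensive version of the rc.local step can be sketched as follows. Importing the pool first when it is missing is an assumption layered on top of the answer, which only adds `zfs mount -a`; the function names are hypothetical.

```shell
# Return success if the named pool is already imported.
pool_is_imported() {
    zpool list -H -o name 2>/dev/null | grep -qxF -- "$1"
}

# Import the pool if needed, then mount all datasets.
import_and_mount() {
    pool_is_imported "$1" || zpool import "$1"
    zfs mount -a
}
```

In /etc/rc.local this would be called as `import_and_mount zfsPool`.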

19. Why use zfs for virtual machines??

20. Welcome to the FreeNAS Documentation Project!

https://www.ixsystems.com/documentation/freenas/11.2-U6/freenas.html

FreeNAS_User_Guide.pdf

FreeNAS® How-To Tutorial Guides

How To Setup Shares, Groups & User Permissions in FreeNAS 11

Virtualization Tutorial: Configuring Citrix XenServer With FreeNAS & ISCSI For Storage